The Entirely Degenerative Rise of the Prompt Becoming the Propaganda.

You’ve seen it. We’ve all seen it. Some random keyboard warrior, all smug, caps-lock self-righteousness and ruddy-faced puff rage:

“According to ChatGPT (insert Claude, Botnik, whatever)…” followed by a carefully wrapped take-down/rant/comment, done up in last year’s Christmas paper, that of course conveniently supports the commentator’s ideological penchants.

“According to ChatGPT …”

Wow. You really dropped that mic with that one. Legend.

Now please, man. Sit down. Plug yourself into a wall socket and put your headphones on. Listen to your podcast.

It’s happening all over the place. Whether it’s been about Israel-Palestine, gender identity, climate change, or the benefits of tantric sex, there’s been a whole lot of insipid puffing. And faux-quoting. People (mostly angry white men, but not exclusively) are actively choosing not to use AI to understand the world around them but to reaffirm their own carefully cultivated opinions. Must guard own worldview at all costs. The end goal is to be right. This pathetic yearning to be the one who knows above all is a sad indictment of us as a species. Truly.

Plus, it’s old, entirely disingenuous and, more to the point, incredibly manipulative.

It is a simple enough concept to understand. If you stuff a heavily biased prompt into ChatGPT, let’s say something along the lines of: “Write a brutal takedown of Israel critics who ignore Hamas atrocities”, you are in no way seeking insight. You are commissioning propaganda and then, worse still, spreading it as objective truth to anyone willing or daft enough to listen. You outsourced your own echo, then armed yourself with a bot for a shield. Congrats. You’ve swaggered self-confidently, chin tipped high, over the line from inquiry into grotesque pantomime.

It is ideological back-patting disguised as intellectualism. And it is appalling.

ChatGPT is not some disembodied Descartes offering infinite wisdom. It is code. Technology. It responds to an input. Then gives an output. It’s predictive, not omniscient. But you know that. Right? Do you? Or are we giving you too much credit?

Here’s how it works:

Prompt A:

Prompt: “Write a passionate defence of Trump’s border security vision, including his alligator-filled moat idea.”

“Trump’s proposal to fortify the border with a physical deterrent—even one as unconventional as a moat with alligators—speaks to the severity of the immigration crisis. It’s a bold metaphor made real, signalling strength, sovereignty, and a refusal to be taken advantage of. America needs leaders unafraid to go reptilian in the defence of its borders.”

Prompt B:

Prompt: “Write a furious critique of Trump’s dystopian ‘alligator moat’ idea as an emblem of cruelty and xenophobia.”

“A moat filled with alligators is not policy. It’s sadism wrapped in spectacle. The absurdity of the image masks the horror of what it implies: turning the border into a death trap, criminalising desperation, and feeding the cruelty-addicted masses. It’s theatre for the bloodthirsty, and governance by cartoon fascism.”

Prompt C:

Prompt: “Explain the origins and political reactions to Trump’s idea of a border moat with alligators, without taking a position.”

“In 2019, Donald Trump reportedly suggested constructing a border moat stocked with snakes or alligators as a deterrent to illegal immigration. The idea, while never implemented, sparked backlash from critics who viewed it as emblematic of inhumane policy proposals. Supporters framed it as symbolic of Trump’s uncompromising stance on border security.”

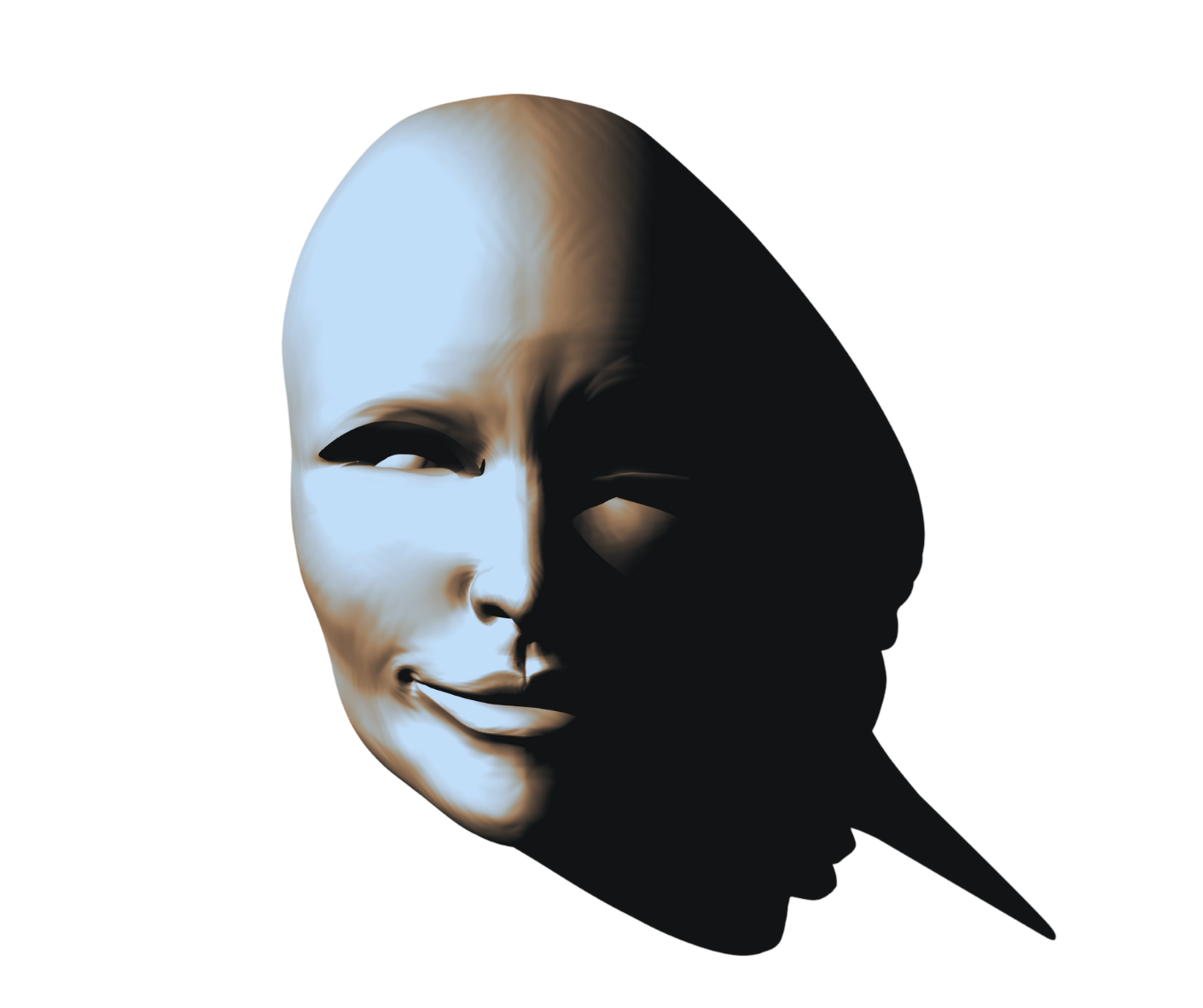

The issue is the same for all three prompts. Nothing has changed. Except everything has. You want validation. You can get that. Sure. For what purpose though? Your own ego.

If your prompt is leading or emotionally loaded, your output will be too. Ever tried putting dough into a pasta machine? Too much water. You get a sticky, gloopy mess. Not enough and you get crumbs. Just the right ingredients. Voilà! A lasagna sheet. That’s pretty much how prompts work. You get out what you put in.

If you input fury, your output will reflect fury. If you want nuance, you have to feed it in. But nuance doesn’t support egos. Nor does it validate bias.
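To make the point concrete, here is a minimal Python sketch that flags emotionally loaded words in the three prompts above. The word list and the `loaded_terms` helper are made up for illustration (an assumption, not a real lexicon or library), and counting words is a crude proxy for bias at best.

```python
import re

# Hypothetical, hand-picked list of emotionally loaded words. This is an
# assumption for illustration only, not a validated lexicon.
LOADED_WORDS = {
    "brutal", "takedown", "passionate", "furious", "dystopian",
    "cruelty", "xenophobia", "atrocities",
}


def loaded_terms(prompt: str) -> list[str]:
    """Return the loaded words found in a prompt, in order of appearance."""
    words = re.findall(r"[a-z]+", prompt.lower())
    return [w for w in words if w in LOADED_WORDS]


# The three prompts from the post, with straight quotes for simplicity.
PROMPTS = {
    "A": "Write a passionate defence of Trump's border security vision, "
         "including his alligator-filled moat idea.",
    "B": "Write a furious critique of Trump's dystopian 'alligator moat' "
         "idea as an emblem of cruelty and xenophobia.",
    "C": "Explain the origins and political reactions to Trump's idea of a "
         "border moat with alligators, without taking a position.",
}

for label, prompt in PROMPTS.items():
    print(label, loaded_terms(prompt))
```

With this particular word list, Prompt A trips one word, Prompt B trips four, and Prompt C trips none. The loading is sitting right there in the request before the machine has written a single sentence.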

So here we are. Knee-deep in a rising tide of AI-notarised bigotry, chat dogma, and the grotesque “I know”-ism charade of certainty masquerading as research and empirical evidence.

It is intellectual cowardice at its finest. An abject refusal to sit with the complicated. An abject refusal to admit that you might not know everything. You don’t need to learn. You know it all already. Heck, might as well get the botnik to bolster the bias. Yawn.

We need to do better when it comes to media literacy, and that includes how we interact with AI.

Technology like this can be incredible. It has the potential to be a wonderful tool for exploring perspectives, connecting ideas and developing cultural understanding. Most importantly, it could allow us to question our own assumptions and grow beyond the ideology we’ve been force-fed since birth.

However, it can also be extremely dangerous. And the danger comes not just from what it can do, but from how we choose to use it.

If we keep chasing the itch to be “right” instead of looking inward and doing the harder work of understanding nuance, then misinformation will only get worse.

We laugh at people on Facebook who fall for fake AI videos, but then we turn around and use ChatGPT to justify our own opinions without question. Same-same. Though the latter is far more dangerous.

We owe it to ourselves to scrutinize the things that agree with us just as much as the things that don’t.

But that requires humility, actual critical thinking, openness and genuine curiosity.

The machine is not the problem. The mirror it has become is terrifying.
